Keeping Honest: Solving the Oracle Problem

Smart contracts have become a new paradigm of writing programs on the blockchains. However, like all computer programs, smart contracts are only as useful as their inputs and outputs from the outside world.

An example of such a smart contract is World Cup betting. Let’s say we open up a betting pool for England vs Croatia in the World Cup Finals. Everyone sends their Ether into the betting smart contract, which records everyone’s bets. This smart contract then requires the result of the match from the outside world, because it has no knowledge of the game. The creators of the smart contract could opt to use the official FIFA website for match results. When a result is announced by the official FIFA website, the smart contract is triggered to release funds to the winners of the bet.

In this scenario, the financial outcomes of the participants hinge on the data source for the match’s result, and therein lies the problem. That data source is thus a trusted third party, who could potentially behave in a dishonest way for its financial gain. For example, owners of the data source could take a bet on either side of the game, as long as they announce a result that favors them. This means that they are able to rig the bet to their favor each time.

If we aren’t able to rely on an outside data source, could we instead rely on the creators of the smart contract to report the results correctly? You can — except that this rolls us back to centralized reporting systems, which defeats the purpose of using a smart contract in the first place. This is the Oracle Problem in smart contracts.

The Oracle Problem

The Oracle Problem is this — no external data from the outside world can be brought into the decentralized systems without trusting a third party as an oracle. Once you have an oracle dictating the data inputs into your decentralized system, there is little to no benefit for your application being decentralized.

In my opinion, the Oracle Problem is the single largest problem to be solved in any programmable, decentralized system. Any system that manages to solve the problem in a trustless and secure manner will reap the benefits for years to come.

To solve this problem, instead of relying on trusted third parties as oracles in the system, many projects use methods that analyze the submissions of the participants of the network and their behaviors. This blog post explores some of these methods of arriving at a correct data input.

Schelling Point

Schelling points are focal points of the system where the majority of the participants arrive. An example of a Schelling point at work: ask 100 random people on the street what comes to mind when “San Francisco” is mentioned, and you will find that a good majority of them answer “Golden Gate Bridge.”

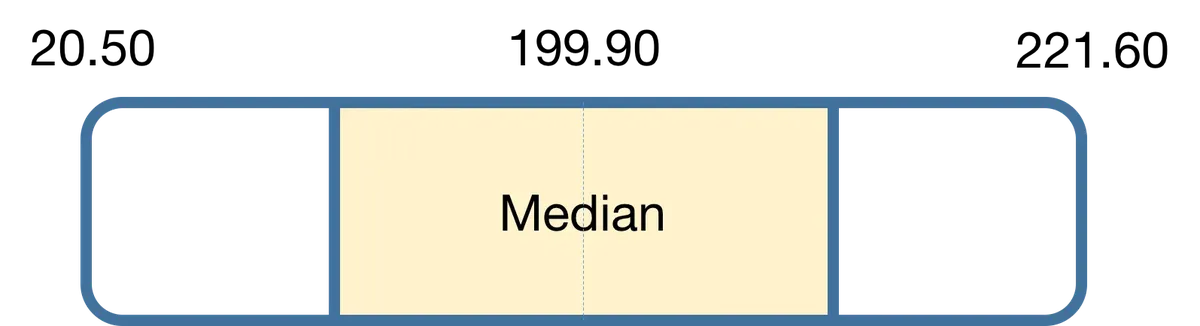

In a more concrete example, Vitalik Buterin introduced SchellingCoin. In this scheme, all participants will submit what they think is the correct input, but only the median of the submissions will be accepted as correct. For example, if we want to get the current price of Apple (AAPL) into the system, we query the whole network of participants to input what they think is the current Apple stock price.

In the figure above, only submissions of Apple’s stock that are in the 1st quartile to 3rd quartile are paid for reporting, while the other reporters are not paid.

Why is the Schelling point so effective in arriving at the correct answer? There is a second-order effect called the Keynesian Beauty Contest. Participants are incentivized to correctly guess what the other participants are guessing, since “fitting in” is the best strategy. In the absence of communication between participants, they will go with their best bet to fit in — the truth.

However, this scheme also has some implications:

- Accepting submissions that are between the 1st–3rd quartile means that if the majority of the participants go rogue and report that Apple’s stock price is $1.00, it will be accepted as the correct answer.

- This scheme ignores the fact of Sybil attacks. Should an adversary take control of the majority of the system’s submissions, they can set the median of the data, and essentially manipulate the market to their liking.

To have the system work, the incentives of colluding with other participants must be less than the incentive for reporting correctly. Bitcoin solves this by making it more profitable to mine new coins than to collude. In a Schelling point system, it is not always clear that the profits of reporting correctly all the time are more than the profits of undermining the reporting system. This has to be evaluated on a case-by-case basis — which I will illustrate in the Augur forking example below.

Disputes & Forking

Prediction markets like Augur and Gnosis aim to solve the Oracle Problem. This is because a wrong outcome reported will hurt the financial well-being of honest market participants. To mitigate this, they have dispute rounds for participants to open a dispute about the reported outcome of a market. This dispute round continues until a certain threshold of votes is achieved, at which point the network arrives at a fork.

For example, given a market with only two outcomes — Will Donald Trump become the President of the United States? After the elections, the reporter correctly reports that Trump is indeed elected POTUS. However, some market participants choose to go rogue and create a dispute in the system with sufficient votes. The network forks into two separate forks:

- The fork that Trump is correctly reported as elected President.

- The fork that he is not elected.

These forks are completely disjoint, and participants cannot transfer tokens between forks. In the event of a fork, token holders must choose which fork to migrate their tokens into. In this example, there is a “Donald Trump” fork and a “Hillary Clinton” fork. This event is final and has major consequences if a token holder fails to choose what the majority decides.

Here, we rehash the Keynesian Beauty Contest: How can I, as a token holder, choose the fork where my tokens will have the highest value? The fork where the majority of token holders migrate will naturally be the more valuable one.

Other factors to consider when migrating into a new fork include market activity. In prediction markets, traders are unlikely to trade in a fork founded on untruths, and trading there would be marred by negative sentiment. Market makers might not create markets if trading volumes are low. Hence, token holders are incentivized to choose the fork where the truth holds.

Probabilistic Correctness

Probabilistic correctness is a method of arriving at the correct answer most of the time. One way of doing this is redundancy.

A common use case for achieving probabilistic correctness through redundancy is the outsourcing of computation tasks to untrusted computers. Let’s say an organization wants to delegate a task of scientific computations. The system will randomly assign k participants (or nodes) to perform the computation redundantly, and the final outputs are aggregated by the system.

For our example, we set k = 10, so there are 10 participants performing the task redundantly. The system will first uniquely identify the outputs of all the participants, grouping them by their outputs. Let’s say the outputs are bit arrays, and we have 3 distinct outputs:

GroupA = [0, 0, 0]

GroupB = [0, 0, 1]

GroupC = [0, 1, 1]

The system counts how many participants submitted each group. Suppose:

- GroupA count = 5

- GroupB count = 4

- GroupC count = 1

With Group A having 5 out of 10 submissions, the system can conclude that Group A is the majority and is a “close-enough” approximation to the truth. The system can decide its cut-off point for acceptance (it could be 10% or 50%, depending on data sparsity). The higher the variance of the data, the lower the majority cut-off point might be.

Note that k is a parameter to be tuned by the buyer (the person outsourcing the computation). To increase the probability of returning the correct output, the buyer can increase k. Though more participants can introduce more noise, it amplifies the Schelling point (performing the computation correctly). Achieving a higher probability of correctness thus requires paying more.

This still relies on more honest participants than dishonest ones in the system. Randomly selecting participants who do not know each other might thwart smaller collusion efforts, but may not suffice against larger, well-organized collusions.

Spot Checks

Spot checks are a method of encouraging honesty in the system as a whole. They are random honeypots in an outsourced task’s answer.

For example, the system randomly selects participants to input the closing stock prices of a few companies on a certain date:

Companies = [AAPL, GOOG, FB, AMZN]

KnownClosingPriceIndex = 'GOOG'

KnownClosingPrice = 1268.44

The system already knows or decided beforehand that Google’s (GOOG) closing stock price is 1268.44. This known answer has to be correct.

Next, the participants input the closing stock prices for the 4 companies. Below is an example of a participant’s submission

Answers = {

'AAPL': 207.21,

'GOOG': 1270.55,

'FB': 186.76,

'AMZN': 1886.44

}

Should a participant answer differently for Google’s closing stock price that is, not 1268.44, they are penalized for doing so and their stake could even be slashed. This fear of getting their stake slashed would be able to keep a percentage of the participants honest.

However, this is not sufficient for ensuring honesty in the system. Spot checks are easily gamed by spotting patterns in the system where the honeypot is always chosen. It is most effective when there the submission is large enough that the honeypot is hard to spot. This causes a strain to the buyer also — the buyer will have to supply honeypot answers to the network every single time they need a task to be completed.

Concluding thoughts

An examination of all honesty schemes in varying coins and systems show that there is no proven method for keeping oracles completely honest.

The largest caveat of these schemes are that they only achieve some probabilistic certainty on whether an answer is true or false, but does not provide any guarantees like any proof systems do. This is because asking questions like “Who won the Presidential Election in 2016” is not trivial, and cannot be cryptographically proven.

Thus, blockchains or networks are better off relying centralized oracle systems for general use cases. For the weather or stock prices, there are existing trusted data feeds out there to be utilized with Oraclize on the Ethereum blockchain.

For niche use cases, system designers will have to thread with care for these experiments. But, if they do well enough, the landscape of subjective oracle systems could be revolutionized.

Thank you Julian Koh for providing feedback on this post.